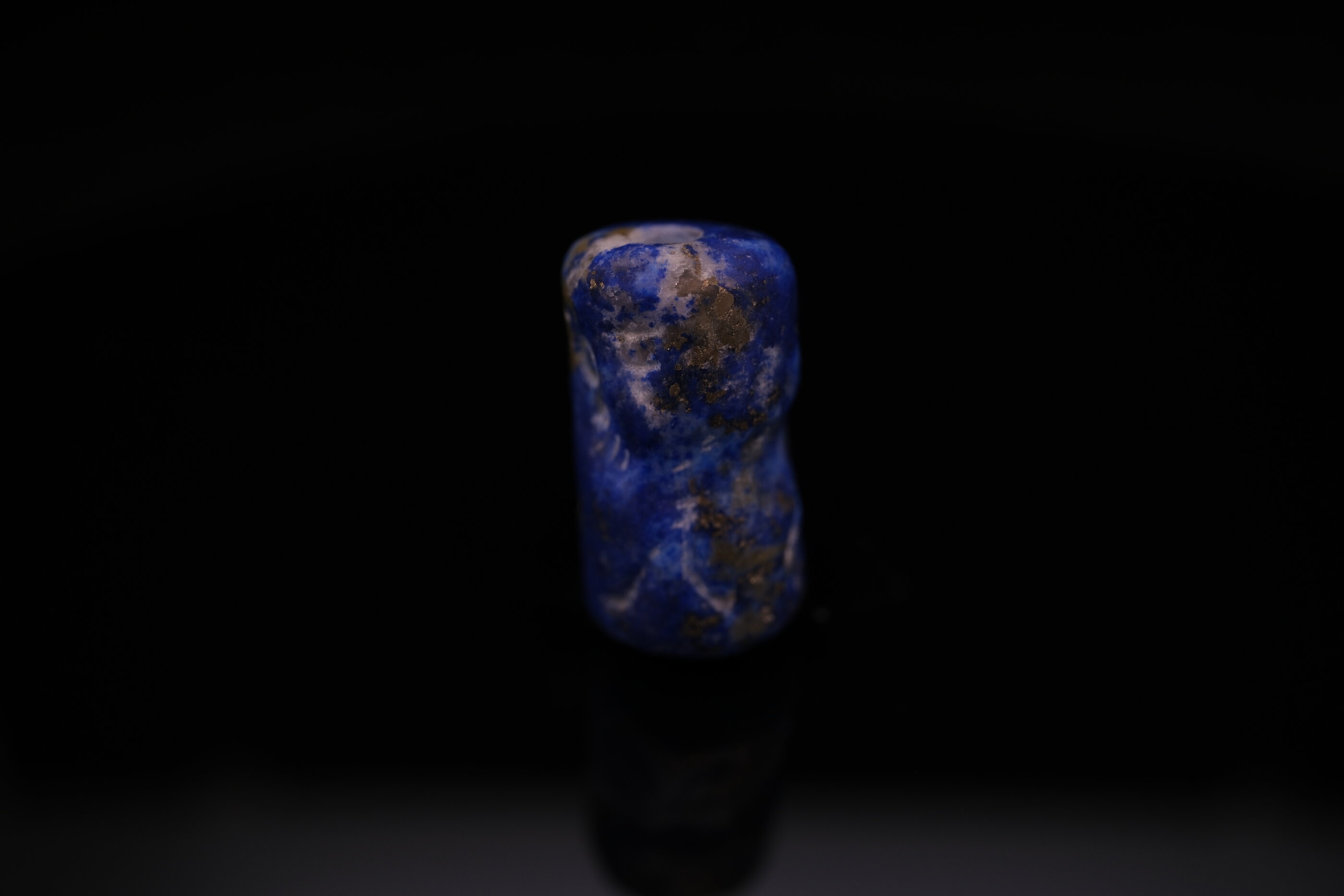

A scribe in Uruk, around 3200 BCE, pressed a cylinder seal into wet clay to mark a shipment of barley. He could not have imagined that five thousand years later, his gesture—rolling a carved stone to create a unique, verifiable mark—would be echoed in digital signatures, cryptographic hashes, and blockchain transactions. The technology changed. The problem didn’t.

We live in exponential times with linear minds.

The Straight Line Illusion

Human intuition evolved for a world of gradual change. Our ancestors needed to predict where a thrown spear would land, when the river would flood, how many days until the fruit ripened. These problems are linear: cause follows effect in predictable proportion.

But technological progress doesn’t work this way.

When asked to estimate exponential growth, humans consistently underpredict. Show someone a quantity that doubles every year and ask how much larger it will be in ten years. They’ll guess maybe 20x, as if each year added another 2x. The actual answer is ten doublings: 2^10, or 1,024x.
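The gap is easy to check by hand or in a few lines of Python. This is only an illustrative sketch: the function names are hypothetical, and the "linear guess" assumes someone who mentally adds 2x per year, which is just one plausible route to a figure near 20x.

```python
def exponential_growth(doublings: int) -> int:
    """Growth factor after the given number of doublings: 2 ** doublings."""
    return 2 ** doublings


def linear_guess(periods: int, per_period: int = 2) -> int:
    """Assumed 'linear mind' estimate: add a fixed multiple each period."""
    return per_period * periods


years = 10
actual = exponential_growth(years)  # ten doublings
guess = linear_guess(years)         # 2x added per year, ten times

print(f"linear guess: {guess}x")
print(f"actual:       {actual:,}x")
print(f"the guess is too low by a factor of {actual / guess:.1f}")
```

The same function applies to any doubling period: swap years for months and the growth factor after 24 doublings is `exponential_growth(24)`.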

This isn’t stupidity. It’s biology. Our neural hardware runs linear approximations because, for most of human history, they were close enough.

They aren’t anymore.

The Pattern Repeats

History shows a recurring pattern: a technology emerges that multiplies human capability, society initially underestimates its impact, then struggles to adapt as change accelerates beyond prediction.

Writing: The First Information Technology

Around 3400 BCE, in the cities of southern Mesopotamia, administrators faced a problem: how to track goods, debts, and transactions across a complex urban economy. The solution was cuneiform (wedge-shaped marks pressed into clay) and the cylinder seal, a carved stone that left a distinctive, hard-to-forge impression when rolled across the clay surface.

What happened next wasn’t linear. Writing didn’t just help administrators count barley. It enabled law codes, literature, mathematics, astronomy, and historical memory. It allowed knowledge to accumulate across generations rather than dying with each scholar. The invention of writing didn’t add to human capability—it multiplied it.

The Sumerians who carved the first cylinder seals could not have imagined the Epic of Gilgamesh, the Code of Hammurabi, or the astronomical tables of Babylon. They were solving a bookkeeping problem. They were inventing civilization.

Printing: The Knowledge Multiplier

Johannes Gutenberg introduced movable type to Europe around 1440. The immediate use case was obvious: produce Bibles faster and cheaper than hand-copying. A reasonable person in 1450 might have predicted that printing would make books slightly more available to wealthy institutions.

What actually happened:

Within a century: the Protestant Reformation (enabled by pamphlet distribution), the Scientific Revolution (enabled by shared research), the spread of literacy beyond clergy, and the eventual collapse of information monopolies that had governed society for a millennium.

The Church, which had controlled the production and interpretation of texts for centuries, understood immediately that something dangerous was happening. But understanding didn’t help. You can’t unpublish a book. You can’t un-spread an idea. The technology had escaped.

The Current Moment

We are living through another such transition. The technology this time is artificial intelligence—specifically, the emergence of language models capable of generating human-quality text, code, and reasoning.

The initial framing was modest: chatbots that could answer questions, coding assistants that could complete functions, writing tools that could draft emails. Useful, certainly. Revolutionary? The consensus in 2022 was skeptical.

Then the curve bent upward.

The Feedback Loop

The breakthrough isn’t just better models. It’s what happens when AI systems start improving AI systems.

Consider the development pipeline for software. Traditionally: human writes code, human reviews code, human tests code, human deploys code. Each step requires human attention, human time, human judgment. The throughput is limited by human bandwidth.

Now introduce AI at each step. AI drafts code. AI reviews code. AI writes tests. AI identifies bugs. Humans supervise, but the bottleneck shifts. Where once a team might complete ten features per month, they might now complete fifty. The same humans, amplified by AI collaborators, produce more.

But here’s where it gets interesting. This amplification applies to AI development itself.

AI systems help researchers write papers, analyze results, generate hypotheses, and debug training runs. The tools that build AI are themselves improved by AI. This is the feedback loop that technologists have discussed theoretically for decades—and it’s now happening.

The Ralph Loop

A curious insight has emerged from the practice of AI-assisted development: you don’t always need the smartest model. Sometimes you need many adequate ones.

The pattern goes like this: rather than waiting for a single genius AI to solve a complex problem, you spawn dozens of simpler agents, each exploring a different approach in parallel. Most fail. Some succeed. The successes are combined, evaluated, refined. The collective intelligence of many “Ralph Wiggums”—each individually limited but together covering vast solution spaces—outperforms single sophisticated attempts.

This inverts a long-held assumption in AI development: that progress requires ever-more-powerful individual models. It turns out that architecture—how you orchestrate many agents—matters as much as raw capability. A well-coordinated swarm of modest models can outperform a single brilliant one.

The implications ripple outward. If parallel exploration beats serial genius, then the constraint on AI capability isn’t just model quality—it’s our ability to orchestrate agents effectively. And that’s a problem humans are good at solving.
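The parallel-exploration pattern described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not a real agent framework: `objective`, `modest_agent`, and `explore_in_parallel` are hypothetical names, each "agent" is just a seeded random search, and the target value 617 is arbitrary.

```python
import random
from concurrent.futures import ThreadPoolExecutor
from typing import Optional


def objective(x: int) -> int:
    """Toy problem: higher is better, with an arbitrary peak at x = 617."""
    return -(x - 617) ** 2


def modest_agent(seed: int, tries: int = 5) -> Optional[int]:
    """One limited agent: tries a handful of random candidates.

    Returns its best candidate, or None if nothing it tried came
    within 50 of the peak (the agent "fails").
    """
    rng = random.Random(seed)  # a different seed means a different approach
    candidates = [rng.randint(0, 1000) for _ in range(tries)]
    best = max(candidates, key=objective)
    return best if objective(best) >= -(50 ** 2) else None


def explore_in_parallel(n_agents: int) -> Optional[int]:
    """Spawn many agents, discard the failures, keep the best survivor."""
    with ThreadPoolExecutor(max_workers=8) as pool:
        results = list(pool.map(modest_agent, range(n_agents)))
    survivors = [r for r in results if r is not None]
    return max(survivors, key=objective) if survivors else None


print("single agent:", modest_agent(0))
print("swarm of 50: ", explore_in_parallel(50))
```

Here the orchestration is deliberately simple: the evaluation step is a single `max` over the survivors. In the essay's framing, that step is where the design effort goes, since combining and refining partial successes is harder than picking one winner.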

The Singularity Question

The word “singularity” gets thrown around carelessly. In mathematics, a singularity is a point where a function stops being well-behaved: it becomes undefined or diverges to infinity, as 1/x does at x = 0. In technological forecasting, it refers to a hypothetical moment when AI improvement becomes so rapid that human prediction becomes meaningless.

Are we approaching that point?

The honest answer: we don’t know. But the honest answer is also that we wouldn’t necessarily know if we were.

At 24 months with monthly doubling: 2^24 = 16,777,216x error

The scribes of Uruk couldn’t predict the Epic of Gilgamesh. Gutenberg couldn’t predict the Reformation. We may be equally blind to what AI enables.

What we can say: the feedback loop is real. AI systems are contributing to AI development. The pace of capability improvement has not slowed. And our linear intuitions are probably wrong about what happens next.

What the Objects Teach Us

Every ancient object represents a moment of technological sophistication that seemed permanent to those who made it. The bureaucrat pressing his cylinder seal into clay was using cutting-edge information technology. The mint worker striking a denarius was participating in a standardized economic system that spanned continents. They lived in the future, as we do now.

And yet their futures became our past. Their innovations became our antiques. Their exponential curves flattened into historical footnotes.

This isn’t pessimism. It’s perspective.

The technologies that transform civilization don’t announce themselves as transformative. They arrive as practical solutions to immediate problems—tracking grain shipments, printing Bibles, generating plausible text. The people deploying them don’t feel like they’re changing history. They feel like they’re doing their jobs.

The cylinder seal in my collection was a tool. Someone used it to sign documents, mark property, authenticate transactions. The carver who made it was solving a client’s problem, not inventing civilization. But civilization emerged anyway, from a million such practical acts multiplying across time.

We are living through another such multiplication. The models are tools. The developers are solving problems. And something is emerging that none of us fully understand.

Linear minds in exponential times.

We’ve been here before. The curve always wins.